06 Mar Streamlining cell encapsulation with AI and microfluidics

Encapsulation of cells inside microfluidic droplets is an important step in several cellular analysis applications. However, because experimental variables and conditions in droplet microfluidics cannot be fully controlled, it can be difficult to obtain encapsulation statistics that follow the Poisson distribution, which is the theoretical expectation for random cell loading. An automated detector is therefore needed to locate droplets, count the cells inside each droplet, and adjust experimental conditions through process control feedback. This week’s research highlight features an article by researchers at Texas Tech University who have developed a novel approach for automated droplet and cell detection. They use You Only Look Once (YOLO), an influential class of deep learning object detectors with several advantages over traditional methods, and investigate both the YOLOv3 and YOLOv5 architectures as the basis for the detector.
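The Poisson expectation can be made concrete. If λ is the mean number of cells per droplet (roughly, cell concentration times droplet volume), the expected fraction of droplets holding exactly k cells is λ^k e^(-λ) / k!. The minimal sketch below tabulates these fractions so they can be compared with counts from a detector; the function names and the value λ = 0.3 are hypothetical illustrations, not figures from the study.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """Probability that a droplet holds exactly k cells when the mean occupancy is lam."""
    return lam ** k * math.exp(-lam) / math.factorial(k)


def expected_occupancy(lam: float, max_k: int = 3) -> dict:
    """Expected fraction of droplets holding 0, 1, ..., max_k-1 cells, plus a '>=max_k' bin."""
    fractions = {str(k): poisson_pmf(k, lam) for k in range(max_k)}
    # Everything not covered by the explicit bins falls into the tail bin.
    fractions[f">={max_k}"] = 1.0 - sum(fractions.values())
    return fractions


# Hypothetical mean occupancy (assumption, not taken from the paper).
lam = 0.3
for label, frac in expected_occupancy(lam).items():
    print(f"{label} cells: {frac:.3f}")
```

A low λ keeps multi-cell droplets rare at the cost of many empty droplets, which is why deviations from these fractions are a useful process-control signal.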

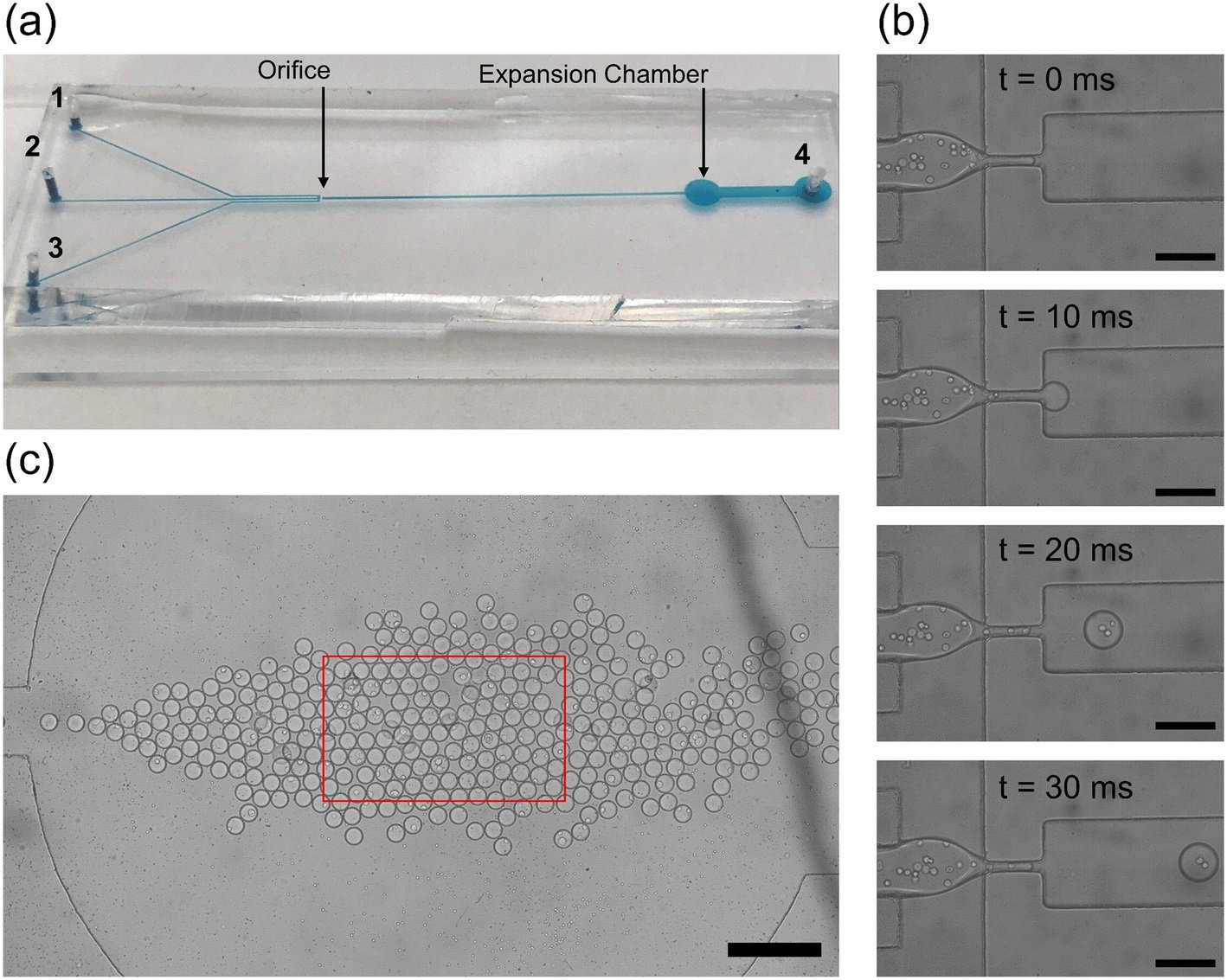

“(a) The physical microfluidic device used for droplet generation. The continuous phase inlets (1, 2) and discrete phase inlet (3) are marked on the left with the outlet of the device on the right (4). The orifice and expansion chamber are labeled with black arrows. (b) A zoomed in view of the droplet generation process. The white circles in the discrete phase are PC3 cancer cells in aqueous suspension. Images are spaced at 10 ms so that the shearing at the orifice and droplet generation is visualized. Individual droplets take only 30 ms to pass allowing for high throughput droplet generation. Scale bar: 150 μm. (c) The expansion chamber of the droplet generator device with droplets tightly packed together. The red box indicates the microscope visualization box displayed on the computer. Scale bar: 400 μm.” Reproduced from K. Gardner, M. M. Uddin, L. Tran, T. Pham, S. Vanapalli and W. Li, Lab Chip, 2022, 22, 4067 DOI: 10.1039/D2LC00462C with permission from the Royal Society of Chemistry.

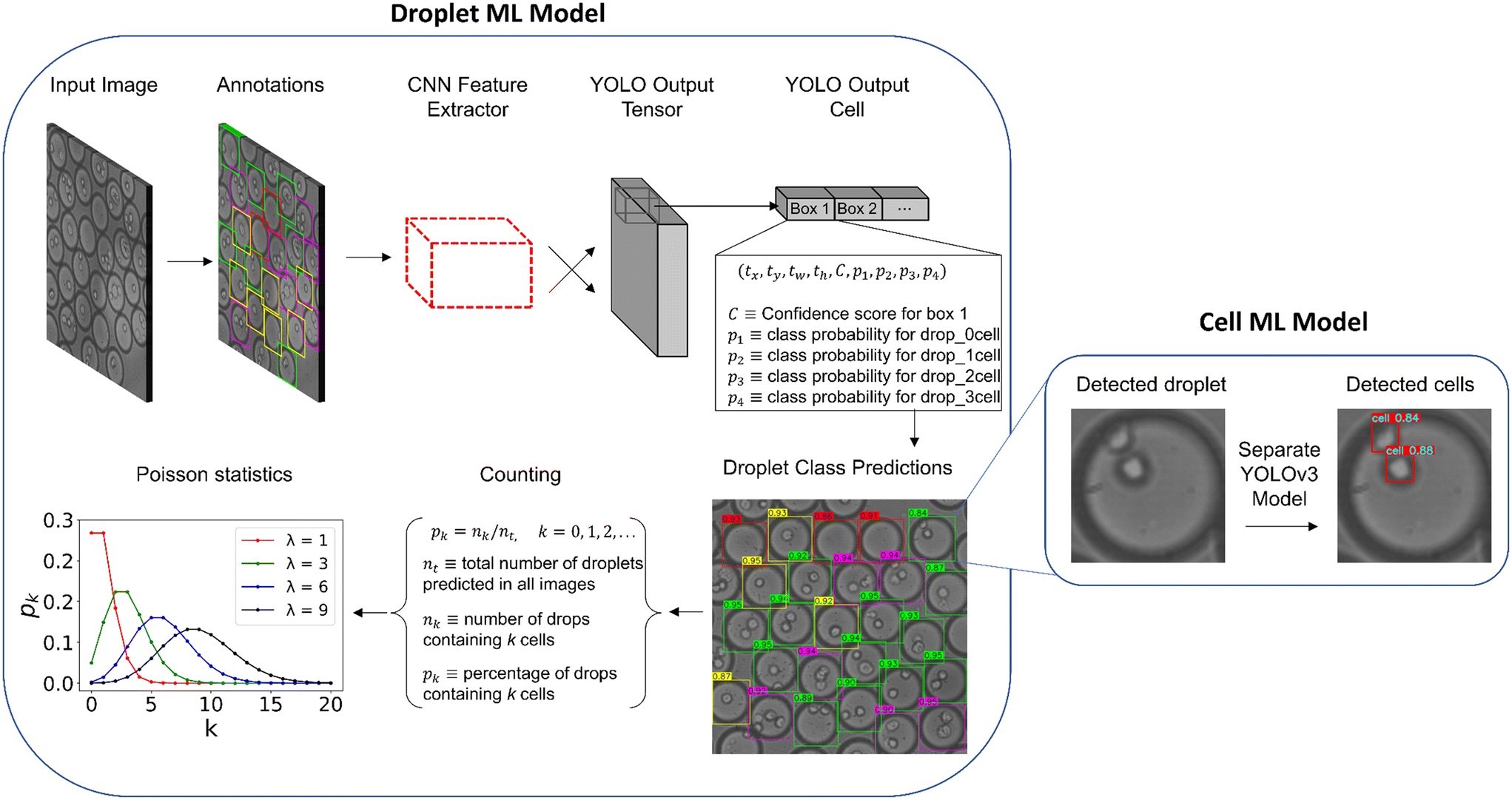

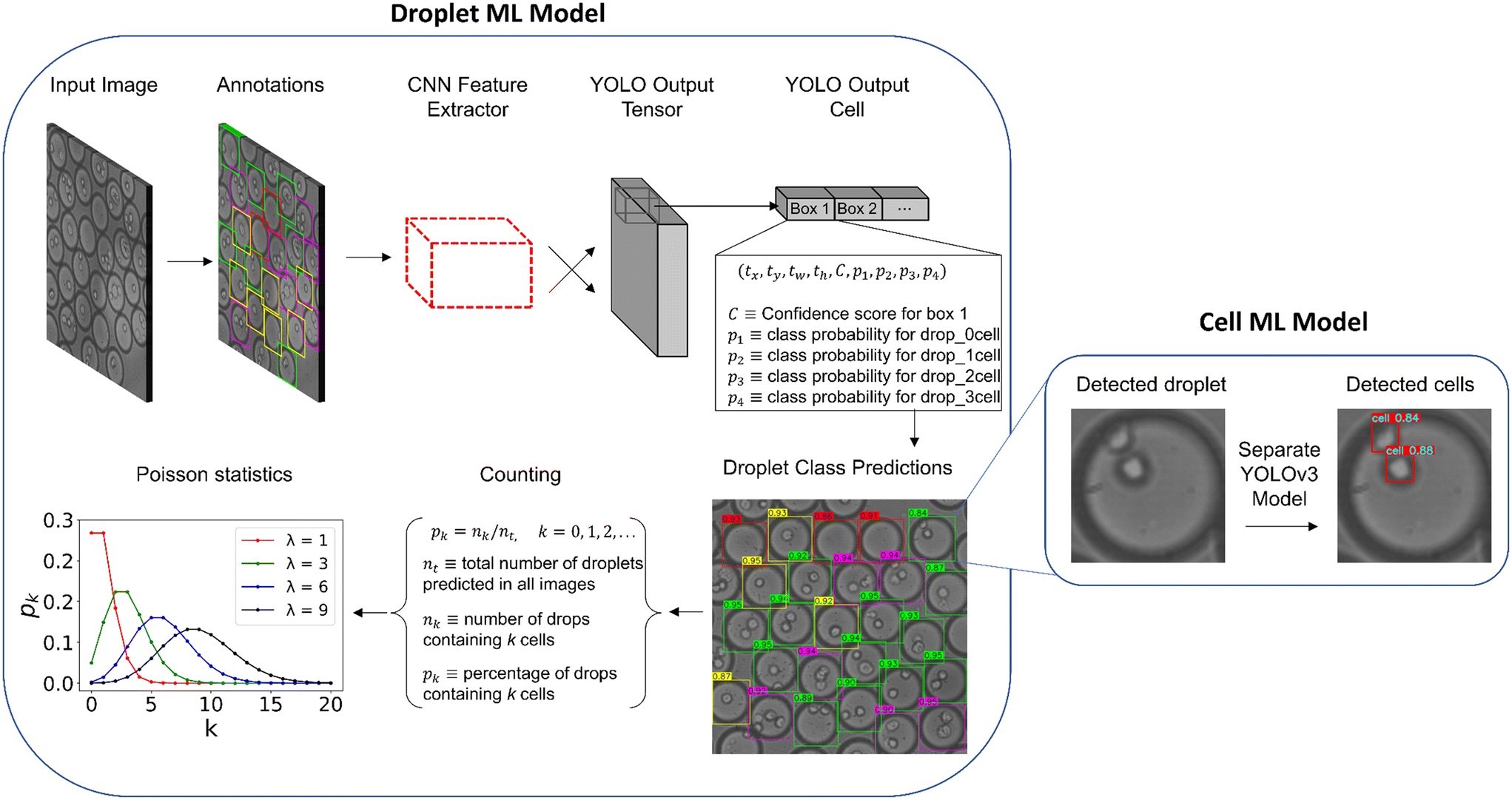

In this study, the YOLOv3 and YOLOv5 architectures were used to build an automated detector for whole droplets and for the individual cells inside them. For those unfamiliar with YOLO, it is a neural network architecture for object detection that has gained popularity in recent years because of its speed and accuracy. Unlike two-stage methods, which first propose candidate regions in an image and then classify each region, YOLO divides the image into a grid and, for every grid cell, predicts bounding boxes and class probabilities simultaneously. Because all objects in an image are detected in a single forward pass of the network, YOLO is much faster than region-proposal methods. By applying YOLO to microfluidic experiments, the research team developed an automated detector for droplets and cells that could have a significant impact on cellular analysis applications.
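The grid idea can be illustrated with a minimal sketch under simplifying assumptions: a single class, one box per grid cell, and outputs already scaled to the 0 to 1 range, roughly in the style of the original YOLO. YOLOv3 and YOLOv5 add anchor boxes, several output scales and per-class scores, so this is not their actual decoding code; the function names and the (tx, ty, w, h, conf) layout are hypothetical. Neighboring grid cells often predict overlapping boxes for the same droplet, so the sketch finishes with non-maximum suppression (NMS), a standard post-processing step for YOLO-family detectors.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float, float]  # (x1, y1, x2, y2, score) in pixels


def decode_grid(preds, img_w: int, img_h: int, conf_thresh: float = 0.5) -> List[Box]:
    """Turn an S x S grid of (tx, ty, w, h, conf) predictions into image-space boxes.

    tx, ty: box centre as a fraction of its own grid cell (0..1).
    w, h:   box size as a fraction of the whole image (0..1).
    """
    s = len(preds)
    cell_w, cell_h = img_w / s, img_h / s
    boxes = []
    for row in range(s):
        for col in range(s):
            tx, ty, w, h, conf = preds[row][col]
            if conf < conf_thresh:  # drop low-confidence grid cells
                continue
            cx, cy = (col + tx) * cell_w, (row + ty) * cell_h
            bw, bh = w * img_w, h * img_h
            boxes.append((cx - bw / 2, cy - bh / 2, cx + bw / 2, cy + bh / 2, conf))
    return boxes


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two boxes (score field ignored)."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(boxes: List[Box], iou_thresh: float = 0.45) -> List[Box]:
    """Greedy NMS: keep the highest-scoring box, discard boxes overlapping it too much."""
    kept: List[Box] = []
    for box in sorted(boxes, key=lambda b: b[4], reverse=True):
        if all(iou(box, k) < iou_thresh for k in kept):
            kept.append(box)
    return kept


# Toy 2 x 2 grid on a 100 x 100 image: two cells see the same droplet.
preds = [
    [(0.9, 0.5, 0.4, 0.4, 0.9), (0.1, 0.5, 0.4, 0.4, 0.6)],
    [(0.5, 0.5, 0.4, 0.4, 0.1), (0.5, 0.5, 0.2, 0.2, 0.8)],
]
detections = nms(decode_grid(preds, 100, 100))
```

In the toy grid, the 0.6-confidence box overlaps the 0.9 box with an IoU of about 0.6 and is suppressed, while the low-confidence cell is filtered before NMS, leaving two detections.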

“Machine learning workflow for detecting cell-laden droplets and encapsulated cells. The left displays the steps taken to train and test the droplet model including the bounding box annotations, YOLO architecture, and droplet counting. The right displays the cell model after the droplet predictions are cropped and resized. Predictions are illustrated for both droplet and cell models for an example image in the production set. The numbers displayed on the colored boxes indicate the confidence values of the predictions.” Reproduced from K. Gardner, M. M. Uddin, L. Tran, T. Pham, S. Vanapalli and W. Li, Lab Chip, 2022, 22, 4067 DOI: 10.1039/D2LC00462C with permission from the Royal Society of Chemistry.

The experimental data for this study were obtained from a microfluidic flow-focusing device whose dispersed phase carried PC3 cancer cells. An expansion chamber downstream of the droplet generator allowed the cell-encapsulated droplets to be visualized and recorded. Detection then ran in two stages: the droplet model first predicted a bounding box for each droplet, and each box was cropped from the original image, resized, and passed to a separate cell model that detected the individual cells inside it.
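The two-stage cascade can be sketched as follows, assuming a grayscale frame stored as a list of rows, integer droplet boxes from the first model, and a hypothetical `cell_detector` callable standing in for the second model. Every crop is resized to a fixed square (64 pixels here, an arbitrary assumption, not the study's input size) so the cell model always sees the same input shape, and the per-droplet counts are then turned into occupancy fractions for comparison with the Poisson expectation.

```python
from typing import Callable, Dict, List, Sequence, Tuple

Image = List[List[float]]        # grayscale frame, indexed image[row][col]
Box = Tuple[int, int, int, int]  # (x1, y1, x2, y2) in pixels; x2 and y2 are exclusive


def crop(image: Image, box: Box) -> Image:
    """Cut a droplet's bounding box out of the frame, clamped to the image borders."""
    h, w = len(image), len(image[0])
    x1, y1, x2, y2 = box
    x1, y1 = max(0, x1), max(0, y1)
    x2, y2 = min(w, x2), min(h, y2)
    return [row[x1:x2] for row in image[y1:y2]]


def resize_nearest(patch: Image, size: int) -> Image:
    """Nearest-neighbour resize to size x size so every crop has the same input shape."""
    h, w = len(patch), len(patch[0])
    return [[patch[r * h // size][c * w // size] for c in range(size)] for r in range(size)]


def count_cells_per_droplet(frame: Image, droplet_boxes: Sequence[Box],
                            cell_detector: Callable[[Image], list],
                            size: int = 64) -> List[int]:
    """Stage 2: run the (hypothetical) cell detector on each cropped, resized droplet."""
    return [len(cell_detector(resize_nearest(crop(frame, box), size)))
            for box in droplet_boxes]


def occupancy_fractions(counts: Sequence[int], max_k: int = 2) -> Dict[str, float]:
    """Fraction of droplets holding 0, 1, ..., max_k-1 cells, plus a '>=max_k' bin."""
    n = len(counts)
    fractions = {str(k): sum(c == k for c in counts) / n for k in range(max_k)}
    fractions[f">={max_k}"] = sum(c >= max_k for c in counts) / n
    return fractions
```

In a real pipeline the crop and resize would typically be done with an image library such as OpenCV or PIL on arrays rather than nested lists; the pure-Python version only keeps the sketch self-contained. The crops are assumed to be non-empty after clamping.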

To validate and test the models, precision and recall were used as metrics, and the droplet detector achieved a high mean average precision (mAP). The trained models were then tested on two sets of 50 sequential images, and the resulting droplet proportions were compared with ratios obtained by hand counting. The proportions from object detection were nearly identical to the hand counts, indicating performance close to human level.
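For readers new to these metrics: precision is TP / (TP + FP) and recall is TP / (TP + FN), where a predicted box counts as a true positive (TP) if it overlaps a not-yet-matched hand-annotated box with an intersection over union (IoU) at or above a threshold, commonly 0.5. The minimal sketch below computes both, plus an all-point interpolated average precision (AP, the area under the precision-recall curve); for a single "droplet" class, mAP at one IoU threshold reduces to this AP. It is one common variant only; the interpolation scheme and IoU thresholds used in the study, or in YOLOv5's own evaluation code, may differ, and all function names are hypothetical.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def match_detections(preds: List[Tuple[Box, float]], gts: List[Box],
                     iou_thresh: float = 0.5) -> List[bool]:
    """Greedily label predictions (highest score first) as true or false positives."""
    used = [False] * len(gts)
    flags = []
    for box, _score in sorted(preds, key=lambda p: p[1], reverse=True):
        best_j, best_iou = -1, iou_thresh
        for j, gt in enumerate(gts):
            if not used[j] and iou(box, gt) >= best_iou:
                best_j, best_iou = j, iou(box, gt)
        if best_j >= 0:
            used[best_j] = True  # each ground-truth box can be matched only once
        flags.append(best_j >= 0)
    return flags


def precision_recall(preds, gts, iou_thresh: float = 0.5) -> Tuple[float, float]:
    flags = match_detections(preds, gts, iou_thresh)
    tp = sum(flags)
    precision = tp / len(flags) if flags else 0.0
    recall = tp / len(gts) if gts else 0.0
    return precision, recall


def average_precision(preds, gts, iou_thresh: float = 0.5) -> float:
    """All-point interpolated AP over the ranked predictions."""
    if not gts:
        return 0.0
    flags = match_detections(preds, gts, iou_thresh)
    recalls, precisions, tp = [], [], 0
    for i, is_tp in enumerate(flags, start=1):
        tp += is_tp
        recalls.append(tp / len(gts))
        precisions.append(tp / i)
    mrec = [0.0] + recalls + [1.0]
    mpre = [0.0] + precisions + [0.0]
    # Make precision monotonically non-increasing from right to left.
    for i in range(len(mpre) - 2, -1, -1):
        mpre[i] = max(mpre[i], mpre[i + 1])
    return sum((mrec[i + 1] - mrec[i]) * mpre[i + 1] for i in range(len(mrec) - 1))
```

With two annotated droplets and three predictions of which one is spurious, this gives a precision of 2/3, a recall of 1.0 and an AP of 5/6.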

The YOLOv5 architecture outperformed YOLOv3 in inference time and training stability, making it more suitable for production applications. Overall, the results show that both algorithms can detect microfluidic droplets with fast inference and high accuracy. More importantly, YOLOv5 proved robust in this microfluidic application while running slightly faster than YOLOv3, which may help alleviate some of the controversy surrounding this PyTorch-based model.

Figures are reproduced from K. Gardner, M. M. Uddin, L. Tran, T. Pham, S. Vanapalli and W. Li, Lab Chip, 2022, 22, 4067 DOI: 10.1039/D2LC00462C with permission from the Royal Society of Chemistry.

Read the original article: Deep learning detector for high precision monitoring of cell encapsulation statistics in microfluidic droplets